Anthropic releases the AI Fluency Index to measure human collaboration

Anthropic has published a new study, the AI Fluency Index, which provides a baseline for understanding how effectively people use artificial intelligence tools. To create this benchmark, the company analyzed 9,830 anonymized conversations from the Claude platform. Instead of simply measuring how often people use the technology, the research focuses on 11 observable behaviors that indicate a deeper level of digital literacy and practical competence.

The report finds that the most successful interactions occur when users treat the model as a thought partner rather than a simple search engine. A major finding is that 85.7 percent of the analyzed conversations involved iteration and refinement, meaning most people build on initial answers rather than accepting the first response. This conversational approach is linked to better outcomes: users who iterate are much more likely to clarify goals, ask for specific formats, and question the reasoning behind the generated text.

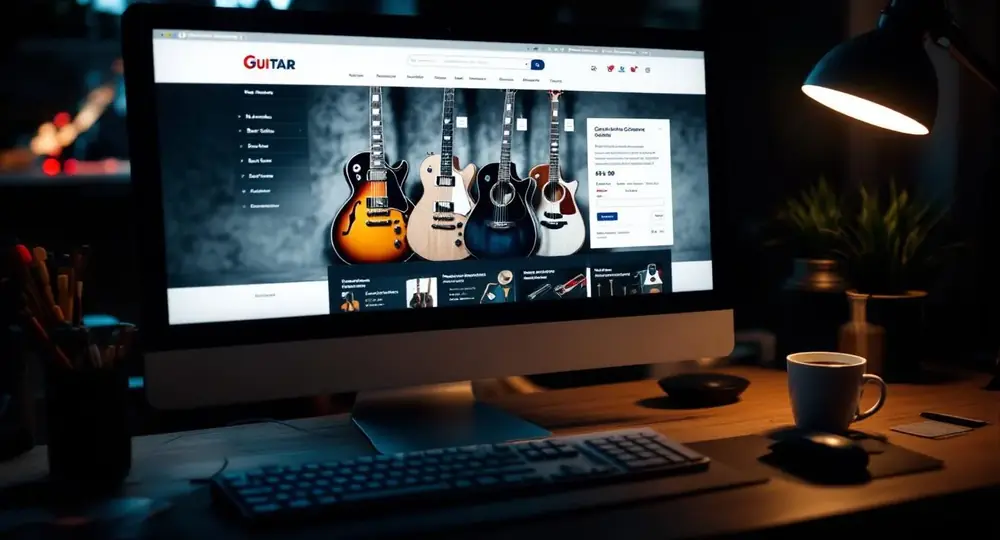

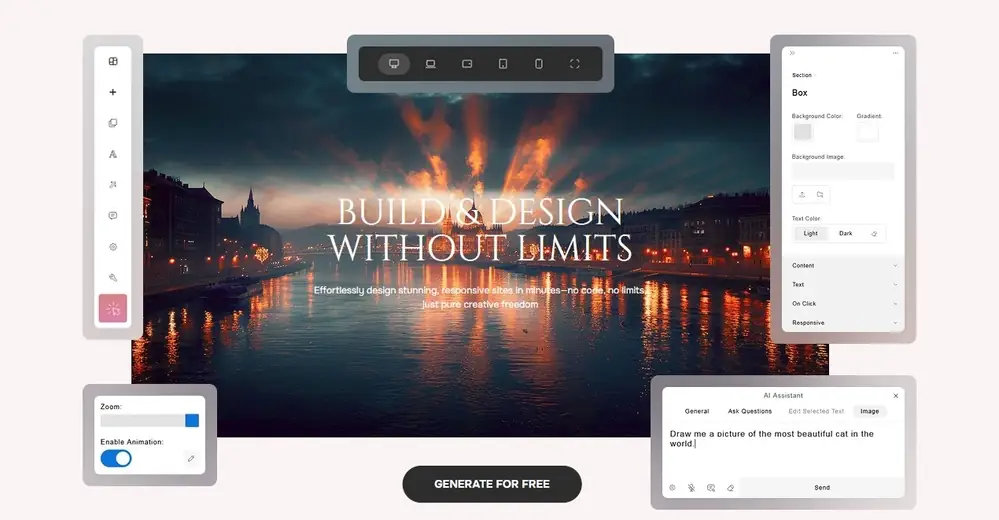

However, the study also uncovered a vulnerability in how people handle highly refined results. When the model delivers a polished artifact, such as a complex chart or a formatted document, users tend to drop their critical guard. The data shows a decrease in the likelihood that people will double-check facts or spot missing context when the output looks professional on the surface.

The introduction of this index highlights a shift in the digital market from basic AI adoption to practical skill development. For consumers and professionals alike, the focus is moving toward learning how to actively manage and direct these systems. As the technology becomes a standard feature in everyday applications, the ability to critically evaluate and refine automated responses is emerging as a core requirement for navigating the modern internet. This means that future software and online services will likely prioritize interfaces that encourage active human collaboration rather than passive consumption.